- Sep 25, 2025

Scaling Web Apps - Asynchrony

In this article we show how asynchronous code keeps threads from sitting idle while they wait for network or I/O operations. That way each thread can be squeezed for its maximum performance.

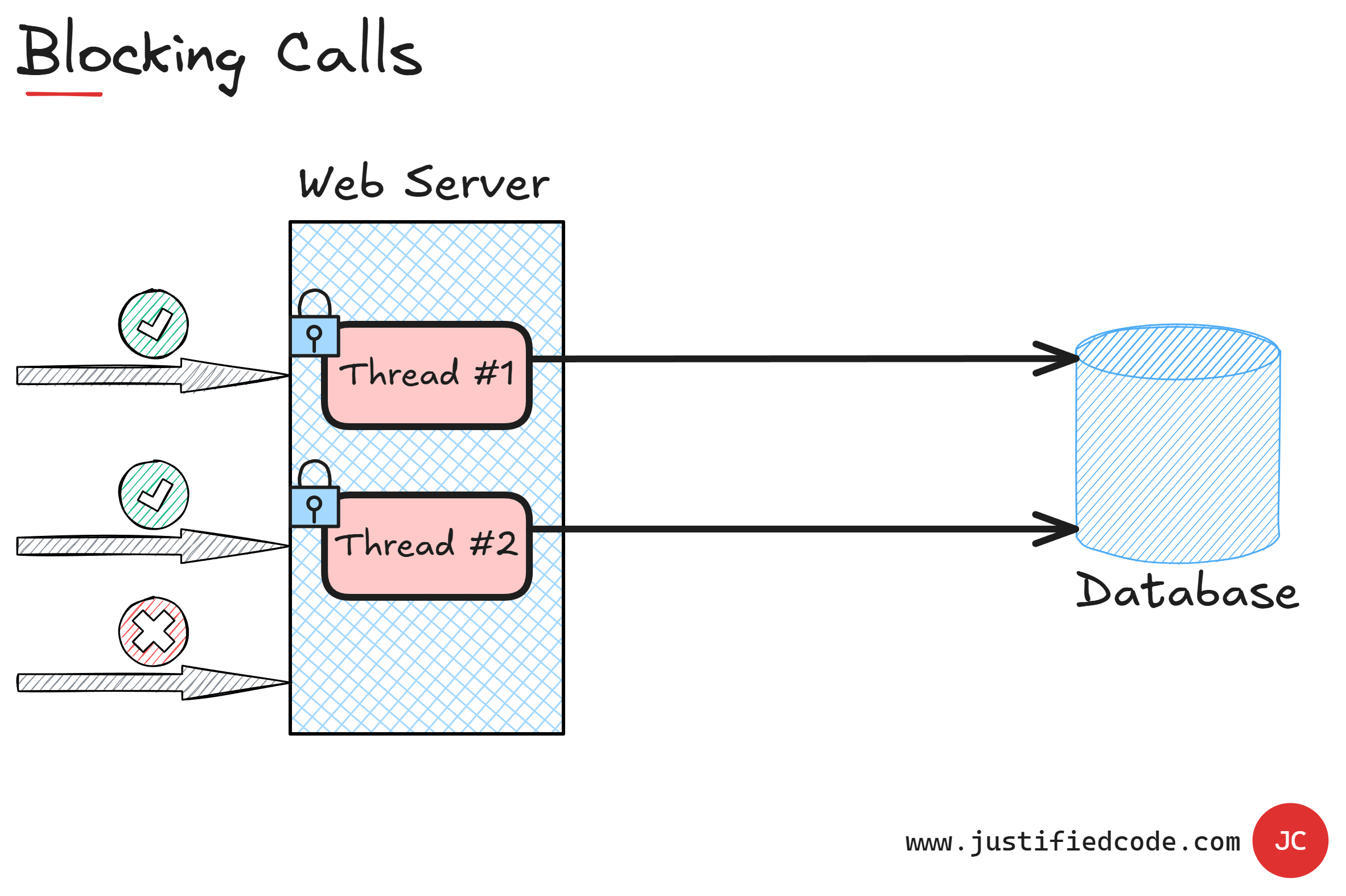

Blocking Calls

As we have seen in the enemies of scalability article, having blocking calls is one of the common roadblocks on our way to better scalability.

A call is blocking when the calling thread has to wait for its result, for example the result of a database query. A call from our code to another part of the same application usually isn't blocking, but the vast majority of code interacts with the operating system, a database, or a remote service.

The web application runs inside a process that spawns a limited number of threads. When an HTTP request arrives at the server, the application process hands the request to a free thread.

As you can see, a thread is flagged as busy (locked) until it has returned a response. If a new request arrives at the process while that thread is busy, it is handed to one of the other free threads.

If the request load is so high that all the threads are busy, the process returns a "server too busy" error. A thread is busy while it executes its associated code. Some of this code is CPU bound, which means that it performs calculations or manipulates data in memory. Other code is I/O bound, which means that it requests something from outside its own process.

During this out-of-process request, the thread just waits for the result. It is marked as blocked, so it is no longer available in the web application's thread pool.
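The effect of a small pool of blocked threads can be sketched in a few lines. This is a minimal illustration, not a real web server: `POOL_SIZE`, `IO_WAIT_SECONDS`, `handle_request` and `serve_blocking` are hypothetical names, and `time.sleep` stands in for the wait on a database. Note one difference from the behaviour described above: the executor used here queues the extra requests instead of rejecting them with an error.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

POOL_SIZE = 2          # assumption: the process owns only two worker threads
IO_WAIT_SECONDS = 0.1  # assumption: each request waits this long on a database


def handle_request(request_id):
    """Simulate a handler that blocks its thread on an out-of-process call."""
    time.sleep(IO_WAIT_SECONDS)  # the thread does nothing useful while it waits
    return threading.current_thread().name


def serve_blocking(request_count):
    """Serve all requests on the small pool; return elapsed time and threads used."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=POOL_SIZE, thread_name_prefix="worker") as pool:
        thread_names = set(pool.map(handle_request, range(request_count)))
    return time.perf_counter() - started, thread_names


elapsed, threads_used = serve_blocking(6)
print(f"{len(threads_used)} threads served 6 requests in {elapsed:.2f}s")
```

Six requests on two threads need at least three rounds of waiting, even though the CPU is almost idle the whole time. That idle time is what asynchronous code tries to reclaim.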

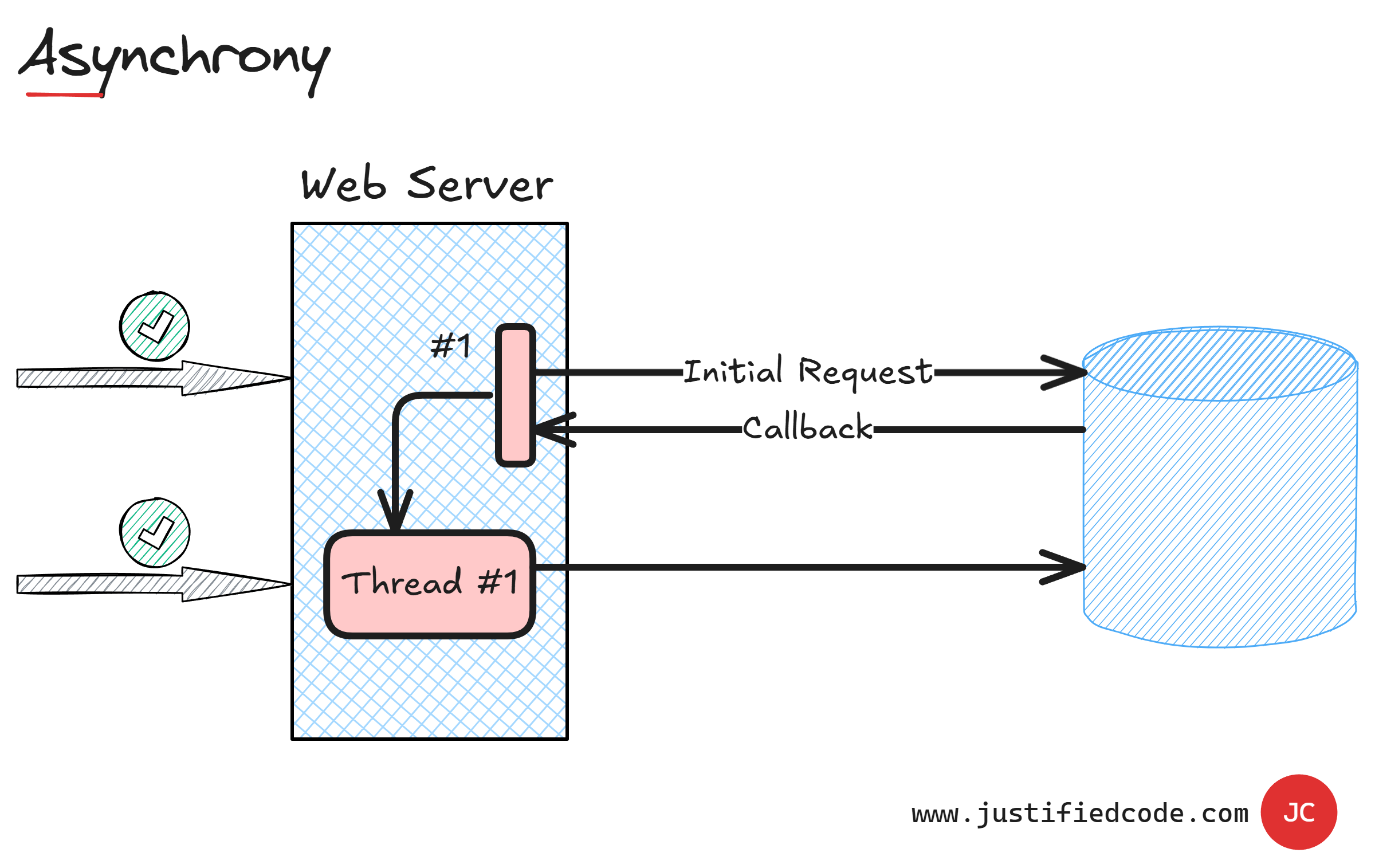

Asynchronous Calls

The solution is to write asynchronous code if the programming language supports it. The async call is broken into two parts: the initial request and the callback.

As you can see, the initial request from thread #1 is forwarded to the component together with a callback address, which tells the component where to deliver the result. When the result is ready, the callback signals an event and the calling thread picks up the result. During the wait, thread #1 is free to serve another request.
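The two parts, the initial request and the callback, can be sketched as follows. This is only an illustration under stated assumptions: `component_query` is a hypothetical stand-in for a database or remote service (simulated with a helper thread and a short sleep), and a `queue.Queue` plays the role of the event that the callback signals.

```python
import queue
import threading
import time


def component_query(sql, callback):
    """Hypothetical slow component: returns at once, calls `callback` when done."""
    def work():
        time.sleep(0.05)               # stands in for the component doing its job
        callback(f"rows for {sql!r}")  # deliver the result to the callback address
    threading.Thread(target=work, daemon=True).start()


completed = queue.Queue()  # the callback signals completion through this queue
log = []

# Part 1: the initial request. It returns immediately, so the caller is not blocked.
component_query("SELECT 1", completed.put)
log.append("request sent")

# The calling thread is free to do other work while the component is busy.
log.append("served another request")

# Part 2: the callback has been signaled and the caller picks up the result.
result = completed.get(timeout=1)
log.append(f"picked up: {result}")
print(log)
```

The important detail is the order of the log entries: the calling thread does other work between sending the request and picking up the result.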

Multi-threading v. Asynchrony

Multi-threading and asynchrony are often confused with each other.

Asynchrony can happen in a single thread or in multiple threads. A browser JavaScript engine runs in a single thread; however, the events are handled asynchronously.

When we use async requests from JavaScript, the initial request is sent and then the executing thread is free to run other JavaScript code. When the async HTTP response arrives, the callback is signaled and the thread picks up the response contents as soon as it's free to do so.

A single thread's lifetime is sliced into idle and running portions, and asynchrony lets that single thread keep more than one outbound request in flight at the same time.
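The same idea can be shown outside the browser. The sketch below uses Python's `asyncio` event loop as an analogue of the JavaScript event loop; `fetch` is a hypothetical function and `asyncio.sleep` stands in for a non-blocking network call.

```python
import asyncio
import threading
import time


async def fetch(name, delay):
    """Hypothetical outbound request; the sleep stands in for network latency."""
    await asyncio.sleep(delay)  # while waiting, the thread runs other tasks
    return name, threading.get_ident()


async def main():
    started = time.perf_counter()
    # Start five outbound requests and wait until all of them have completed.
    results = await asyncio.gather(*(fetch(f"req-{i}", 0.1) for i in range(5)))
    return results, time.perf_counter() - started


results, elapsed = asyncio.run(main())
thread_ids = {thread_id for _, thread_id in results}
print(f"{len(results)} requests on {len(thread_ids)} thread in {elapsed:.2f}s")
```

All five requests report the same thread identifier, and the total time stays close to the duration of one request rather than the sum of all five.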

While asynchrony is focused on how to avoid waiting on the result from another place, multi-threading or parallelism is about how to distribute and coordinate the CPU work between many available threads.

While asynchrony is beneficial in many places, it comes with a price tag. Asynchronous code is more complex to follow and to understand than synchronous code.

One last note: asynchrony is helpful mostly when there is a wait on another component such as the operating system or the database. In other words, it is mostly helpful on I/O bound requests.

If the operation is CPU intensive, asynchrony doesn't help us that much. CPU intensive operations require processor time, so even though the calling thread is free to accept another request, there is little spare CPU capacity left to actually serve it.
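The difference between the two kinds of work can be made visible by counting how many operations are in progress at the same moment on a single thread. This is a minimal sketch with hypothetical names (`Tracker`, `io_bound`, `cpu_bound`, `peak_overlap`); it covers only the single-threaded case and says nothing about spreading CPU work across several threads or processes.

```python
import asyncio


class Tracker:
    """Counts how many operations are in progress at the same moment."""

    def __init__(self):
        self.current = 0
        self.peak = 0

    def __enter__(self):
        self.current += 1
        self.peak = max(self.peak, self.current)
        return self

    def __exit__(self, *exc_info):
        self.current -= 1


async def io_bound(tracker):
    with tracker:
        await asyncio.sleep(0.05)  # waiting: the thread is released to other tasks


async def cpu_bound(tracker):
    with tracker:
        return sum(i * i for i in range(100_000))  # computing: nothing to await


async def peak_overlap(operation, count=4):
    """Run `count` operations together and report how many overlapped at most."""
    tracker = Tracker()
    await asyncio.gather(*(operation(tracker) for _ in range(count)))
    return tracker.peak


io_peak = asyncio.run(peak_overlap(io_bound))
cpu_peak = asyncio.run(peak_overlap(cpu_bound))
print(io_peak, cpu_peak)  # prints "4 1"
```

The four I/O bound operations overlap because each one gives the thread back while it waits. The CPU bound operations run strictly one after another, because there is no wait during which the thread could do something else.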

Next Steps

We've seen the effect of synchronous calls to the database or remote services. The calling thread is blocked and unable to do anything other than wait for the result.

We have also seen how asynchronous calls can enable the application to use the threads in a more efficient manner.

In the next article we will see how to isolate the calls from one application subsystem to another using queueing. In case you don't want to wait, head to the Web Applications Scalability course where I break all 5 components of scalability down while annotating every neat diagram with my iPad Pencil, sharing raw experience from the field you won’t find anywhere else.