- Jul 3, 2025

Is gRPC Justified?

Our team recently faced a tough decision while optimizing a mobile financial app’s backend. We had a hard 200 ms response time goal to meet. At the same time, banking regulations forced us into a segregated three-tier network architecture that added unavoidable latency.

To claw back every millisecond possible, we decided to change how our internal services communicate. Instead of the typical JSON over REST, we bet on gRPC with Protocol Buffers. Here’s why that choice was justified.

A Strict Latency Goal in a Three-Tier Architecture

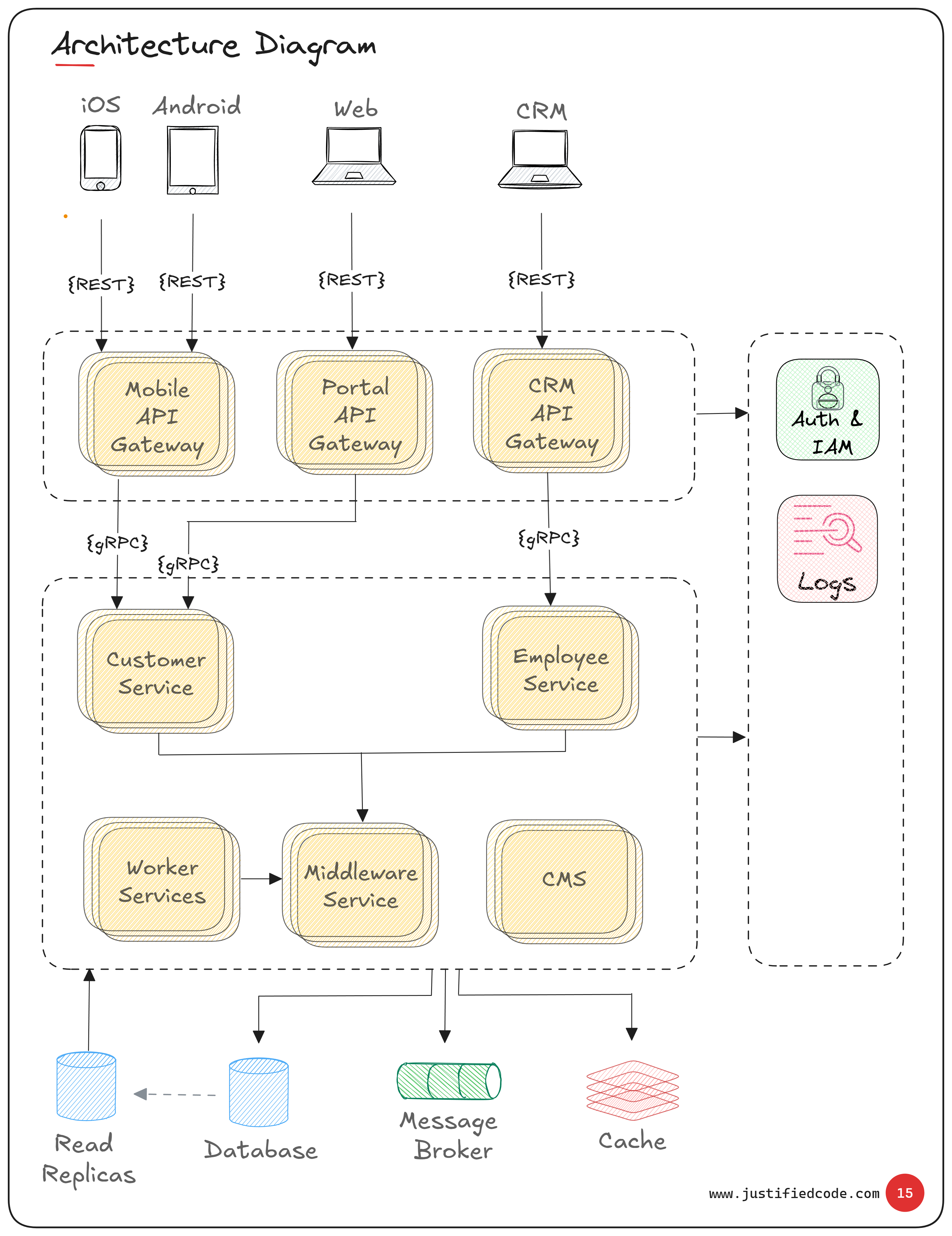

As illustrated in the architecture diagram, our fintech system operates within a tightly regulated three-tier network (public, app, and data subnets).

The public subnet hosts the API gateways that mobile clients hit; the app subnet contains our backend services; and the data subnet houses databases, caches, and message brokers.

Each subnet is isolated, meaning every call between these layers incurs extra network overhead (firewalls, routing delays).

With an end-to-end response time budget of only 200 ms, this enforced network separation posed a serious performance challenge.
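To make the budget pressure concrete, here is a minimal sketch of the arithmetic. Only the 200 ms target comes from our requirements; every per-hop figure below is a placeholder chosen to make the sum visible, not a measurement from our system, and the hop names are illustrative.

```python
BUDGET_MS = 200  # end-to-end response time target


def remaining_budget(budget_ms: float, costs_ms: dict[str, float]) -> float:
    """Return how many milliseconds are left after all listed costs."""
    return budget_ms - sum(costs_ms.values())


# Placeholder numbers only (assumptions, not measurements).
hypothetical_costs_ms = {
    "mobile client -> API gateway (public subnet)": 60,
    "API gateway -> backend service (app subnet)": 15,
    "backend service -> database (data subnet)": 15,
    "backend service processing": 50,
    "database query": 30,
}

left = remaining_budget(BUDGET_MS, hypothetical_costs_ms)
print(f"Remaining budget: {left} ms")
```

With so little slack left in a breakdown like this, the cost of serializing and transmitting each internal call becomes worth optimizing.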

Choosing gRPC Over REST

We had two options for communication between the API gateway (in the public tier) and the internal services (in the app tier): stick with JSON over RESTful HTTP, or move to gRPC using Protocol Buffers.

We chose gRPC for our internal service calls (while keeping the client-facing API as REST for compatibility). The reason was straightforward: Protobuf’s binary encoding is far more efficient than JSON’s text-based format.

Smaller binary messages mean less data to send over the network and faster transmission, and Protobuf encodes and decodes data much faster than JSON can be generated and parsed.

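In fact, published benchmarks commonly report that Protobuf can serialize and deserialize data 4–6× faster than JSON while producing significantly smaller payloads, although the exact gain depends on the language, the library, and the shape of the messages.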
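The size difference is easy to see with a small experiment. The sketch below hand-encodes a message in the Protobuf wire format (varints and length-delimited fields) using only the standard library, then compares it with compact JSON. The `payment` message, its field names, and its field numbers are hypothetical and not taken from our schemas; real services would use generated Protobuf classes rather than a hand-rolled encoder.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a Protobuf base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        byte = value & 0x7F
        value >>= 7
        if value:
            out.append(byte | 0x80)  # continuation bit: more bytes follow
        else:
            out.append(byte)
            return bytes(out)


def encode_tag(field_number: int, wire_type: int) -> bytes:
    """A field key is (field_number << 3) | wire_type, stored as a varint."""
    return encode_varint((field_number << 3) | wire_type)


def encode_int_field(field_number: int, value: int) -> bytes:
    """Wire type 0: varint."""
    return encode_tag(field_number, 0) + encode_varint(value)


def encode_string_field(field_number: int, text: str) -> bytes:
    """Wire type 2: length-delimited (length prefix, then UTF-8 bytes)."""
    data = text.encode("utf-8")
    return encode_tag(field_number, 2) + encode_varint(len(data)) + data


# Hypothetical message; field numbers 1-3 are assumptions for illustration.
payment = {"account_id": "ACC-0001", "amount_cents": 125000, "currency": "EUR"}

json_bytes = json.dumps(payment, separators=(",", ":")).encode("utf-8")
proto_bytes = (
    encode_string_field(1, payment["account_id"])
    + encode_int_field(2, payment["amount_cents"])
    + encode_string_field(3, payment["currency"])
)

print(f"JSON: {len(json_bytes)} bytes, Protobuf wire format: {len(proto_bytes)} bytes")
```

Most of the saving comes from replacing repeated field names with one-byte numeric tags and from storing integers as varints instead of decimal text. This shows payload size only; it says nothing about encoding speed, which needs a proper benchmark.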

In a system where every millisecond counts, gRPC gave us the edge we needed to stay within our 200 ms budget.

Documenting the Why

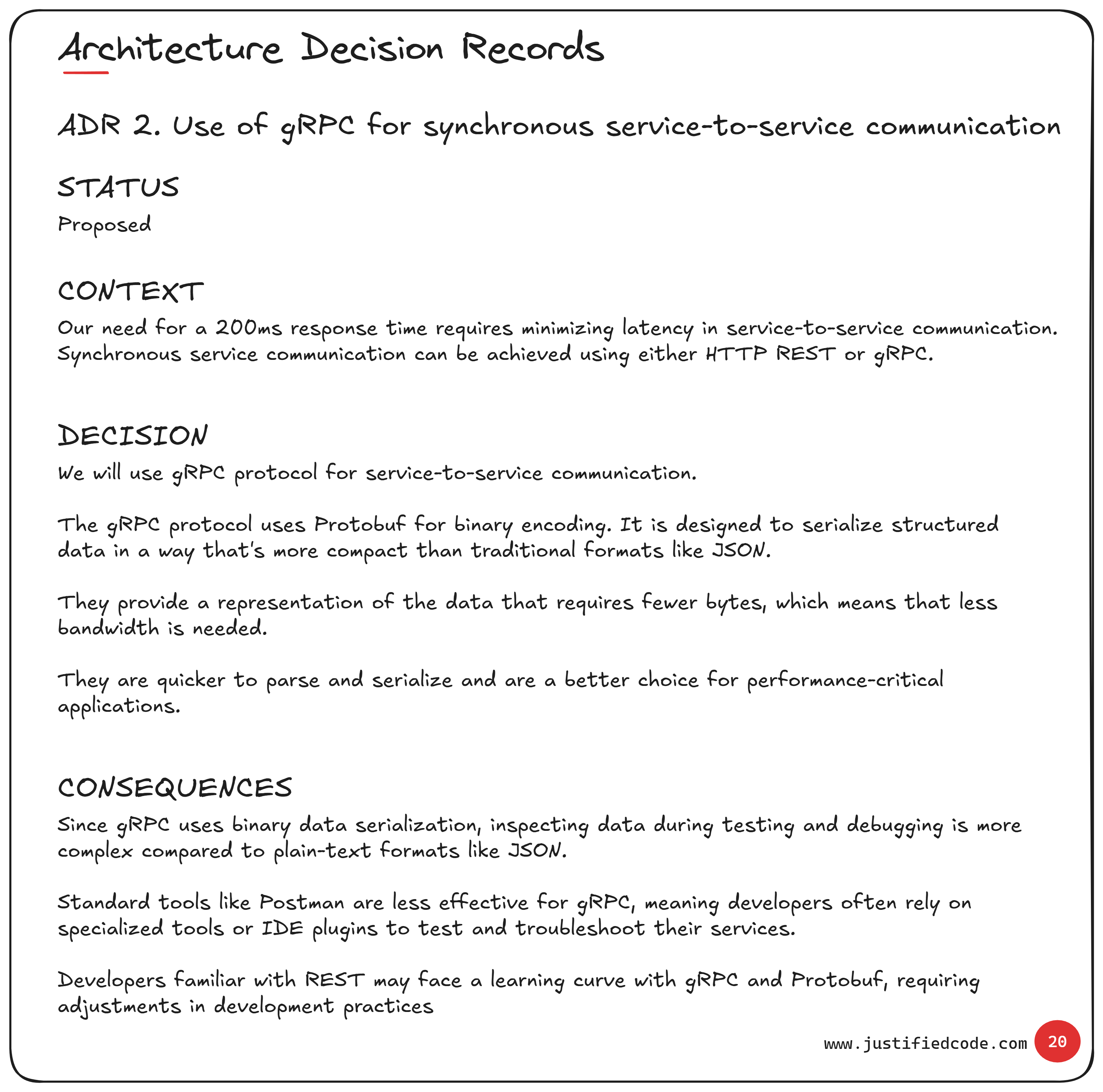

We formally documented this decision in the internal Architecture Decision Record (ADR) shown above.

The ADR outlines the context of our problem (the 200 ms requirement and multi-subnet constraints), the alternatives considered (REST/JSON vs. gRPC/Protobuf), and why we ultimately chose gRPC.

It also captures the consequences of this choice, noting the necessary concessions (like added complexity).

By recording the decision, we ensure any future team members understand why gRPC was selected, how it met our performance goals, and what trade-offs were accepted.

The Trade-offs: Complexity for Performance

Adopting gRPC wasn’t without downsides. Debugging and inspecting data became more challenging. Unlike plain JSON text, you can’t simply read the raw messages on the wire or in logs, since Protobuf data is binary and not human-readable.

Our team had to adjust its tooling: we now use command-line utilities and IDE plugins to test and debug APIs, because tools like Postman were less convenient for gRPC than for REST.
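To show what "not human-readable" means in practice, here is a minimal sketch of a schema-less decoder, similar in spirit to what `protoc --decode_raw` prints. It is an illustration, not the tooling we actually use, and it handles only varint and length-delimited fields. Without the `.proto` file it can recover field numbers and raw values, but not field names or intended types.

```python
def read_varint(buf: bytes, pos: int) -> tuple[int, int]:
    """Read a base-128 varint starting at pos; return (value, next_pos)."""
    result = 0
    shift = 0
    while True:
        if pos >= len(buf):
            raise ValueError("truncated varint")
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:  # no continuation bit: this was the last byte
            return result, pos
        shift += 7


def decode_raw(buf: bytes) -> list[tuple[int, int, object]]:
    """Split a Protobuf message into (field_number, wire_type, value) tuples."""
    fields = []
    pos = 0
    while pos < len(buf):
        tag, pos = read_varint(buf, pos)
        field_number, wire_type = tag >> 3, tag & 0x07
        if wire_type == 0:  # varint
            value, pos = read_varint(buf, pos)
        elif wire_type == 2:  # length-delimited: string, bytes, or nested message
            length, pos = read_varint(buf, pos)
            if pos + length > len(buf):
                raise ValueError("truncated length-delimited field")
            value = buf[pos:pos + length]
            pos += length
        else:
            raise ValueError(f"wire type {wire_type} is not handled in this sketch")
        fields.append((field_number, wire_type, value))
    return fields


# Example bytes: field 1 = varint 150, field 2 = the string "testing".
wire = bytes.fromhex("089601120774657374696e67")
for field_number, wire_type, value in decode_raw(wire):
    print(f"field {field_number} (wire type {wire_type}): {value!r}")
```

The output identifies fields only by number, which is why a log line full of Protobuf bytes is far harder to interpret than the equivalent JSON unless the schema is at hand.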

There’s also a learning curve. Engineers familiar with REST had to get up to speed with writing .proto schemas, learning gRPC conventions, and using code-generation for client/server stubs.

These overheads are the price of gRPC’s speed. We accepted them, given that meeting the 200 ms SLA was paramount.

Conclusion

In the end, complexity must be justified. With our tight 200 ms response target and a network architecture working against us, the efficiency gains from gRPC and Protobuf made the difference between hitting our SLA and missing it.

Yes, we introduced extra complexity, but that trade-off was worth it to achieve the performance our financial app demanded.

When raw speed is a non-negotiable requirement, gRPC delivers in a way that REST/JSON simply couldn’t in our context.
